Production-Grade Prompting with the Anthropic API

Concepts, Parameters, Streaming, Caching, and a Real Enterprise Example

Prompting Claude through the Anthropic API is not just about writing good instructions.

In production systems, prompts behave more like specifications than conversations.

This article explains how prompting works in the Anthropic API, how to design reliable and compliant prompts, and how to build real systems in JavaScript. It ends with a production-grade sentiment analysis prompt.

1. How Anthropic Prompting Really Works

Anthropic uses a message-based API.

Each request contains the entire conversation state.

Claude does not remember anything between API calls.

Mental model

You → send full conversation

Claude → continues from that context

Every request must carry everything Claude needs to know:

Who the assistant is

What rules apply

What the user just said

Any images or documents
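
Because the API is stateless, the application owns the conversation history. The following is a minimal sketch of that bookkeeping. The helper names (createConversation, addTurn, toRequest) and the model placeholder are assumptions made for illustration; they are not part of the Anthropic SDK.

```javascript
// Hypothetical helpers (not part of the Anthropic SDK) that keep conversation
// state on the client side and rebuild the full request for every call.
function createConversation(systemPrompt) {
  return { system: systemPrompt, messages: [] };
}

// Returns a new conversation with one more turn; the input is not mutated.
function addTurn(conversation, role, content) {
  if (role !== "user" && role !== "assistant") {
    throw new Error(`Unsupported message role: ${role}`);
  }
  return {
    system: conversation.system,
    messages: [...conversation.messages, { role, content }],
  };
}

// Builds the parameters for one request: the whole history is sent each time.
function toRequest(conversation, model, maxTokens) {
  return {
    model,
    max_tokens: maxTokens,
    system: conversation.system,
    messages: conversation.messages,
  };
}

let convo = createConversation("You must respond only in Spanish.");
convo = addTurn(convo, "user", "Hello!");
convo = addTurn(convo, "assistant", "Hola!");
convo = addTurn(convo, "user", "How are you?");

// "example-model-id" is a placeholder, not a real model name.
const request = toRequest(convo, "example-model-id", 200);
console.log(request.messages.length); // 3
```

The resulting object could be passed to client.messages.create(request); after each reply, the assistant text would be appended with addTurn before the next call.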

2. Messages and Roles (Core Concept)

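The Messages API has two message roles, user and assistant. System instructions are not a message role; they go in the top-level system parameter of the request.

| Field | Purpose |
| --- | --- |
| system (top-level parameter) | Hard rules, behavior, constraints |
| user (message role) | Input data or tasks |
| assistant (message role) | Previous model replies (conversation priming) |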

Example

messages: [

{ role: "user", content: "Hello! Only speak Spanish." },

{ role: "assistant", content: "Hola!" },

{ role: "user", content: "How are you?" }

]

Claude will most likely answer in Spanish because:

The conversation history establishes the rule

Claude tends to stay consistent with earlier turns

Production rule

Put non-negotiable rules in system, not in conversation history.

3. System vs User Instructions (Why It Matters)

| Instruction Type | Reliability |
| --- | --- |
| System | Strong, persistent |
| User | Soft, overrideable |
| Assistant | Priming only |

Production-safe example

system: "You must respond only in Spanish."

This holds up better than an in-conversation instruction across:

Long conversations

Truncated history

User attempts to override
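
The "truncated history" case can be sketched as follows. Because the system rules live outside the messages array, trimming old turns does not drop them. The helper name buildTrimmedRequest and its maxMessages parameter are hypothetical, and the sample history is made up for illustration.

```javascript
// Hypothetical helper: keep only the most recent turns, but always send the
// system rules, because they live outside the messages array.
function buildTrimmedRequest(systemRules, history, maxMessages) {
  let recent = maxMessages > 0 ? history.slice(-maxMessages) : [];
  // A conversation is expected to start with a user turn, so drop any
  // leading assistant turn left over from trimming.
  while (recent.length > 0 && recent[0].role !== "user") {
    recent = recent.slice(1);
  }
  return { system: systemRules, messages: recent };
}

const history = [
  { role: "user", content: "Hello!" },
  { role: "assistant", content: "Hola!" },
  { role: "user", content: "How are you?" },
  { role: "assistant", content: "Muy bien, gracias." },
  { role: "user", content: "What time is it?" },
];

const trimmed = buildTrimmedRequest("You must respond only in Spanish.", history, 2);
console.log(trimmed.system);          // You must respond only in Spanish.
console.log(trimmed.messages.length); // 1
```

Had the Spanish rule lived only in the first user message, the same trimming step would have silently removed it.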

4. Temperature: Controlling Randomness

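Temperature controls how predictable Claude’s word choices are. The Anthropic API accepts values from 0.0 to 1.0 and defaults to 1.0.

It does not control intelligence. Even at 0.0, output is not guaranteed to be identical across calls.

| Temperature | Behavior | Use case |
| --- | --- | --- |
| 0.0–0.2 | Most predictable | JSON, analytics |
| 0.3–0.5 | Controlled | Summaries |
| 0.6–0.8 | Creative | Writing |
| 0.9–1.0 | Most varied | Brainstorming; rarely useful for parsed output |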

Example

temperature: 0.0

Use this when:

Output must be parsed

Compliance matters

Decisions depend on correctness
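
One way to keep temperature choices consistent across a codebase is a small lookup keyed by task type. This is only a sketch: the preset names and the exact values are assumptions picked from the ranges in the table above, and temperatureFor is a hypothetical helper.

```javascript
// Hypothetical presets chosen from the ranges in the table; tune for your workload.
const TEMPERATURE_PRESETS = {
  json: 0.0,
  analytics: 0.0,
  summary: 0.4,
  writing: 0.7,
};

// Returns the preset for a task and fails loudly on unknown tasks or
// out-of-range values instead of silently falling back to a default.
function temperatureFor(task) {
  if (!Object.prototype.hasOwnProperty.call(TEMPERATURE_PRESETS, task)) {
    throw new Error(`No temperature preset for task: ${task}`);
  }
  const value = TEMPERATURE_PRESETS[task];
  if (value < 0 || value > 1) {
    throw new Error(`Temperature out of range: ${value}`);
  }
  return value;
}

console.log(temperatureFor("json"));    // 0
console.log(temperatureFor("writing")); // 0.7
```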

5. stop_sequences: When the Model Must Stop

stop_sequences tells Claude when to stop generating.

stop_sequences: ["\nUser:"]

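Claude stops as soon as it generates that string. The string itself is not included in the output, and the response reports stop_reason: "stop_sequence".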

Common uses

Prevent role leakage

End JSON cleanly

Stop agent loops

Key rule

Stop sequences are guards, not fixes for bad prompts.
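
Since a stop sequence is only a guard, it helps to check why generation actually ended before trusting the output. The sketch below reads the stop_reason and stop_sequence fields of a response; describeStop is a hypothetical helper, and the sample objects are hand-written stand-ins rather than real API output.

```javascript
// Interprets why generation ended, given an object shaped like a
// Messages API response (only stop_reason and stop_sequence are read).
function describeStop(response) {
  switch (response.stop_reason) {
    case "stop_sequence":
      return `stopped at custom sequence ${JSON.stringify(response.stop_sequence)}`;
    case "max_tokens":
      return "truncated: max_tokens reached, output may be incomplete";
    case "end_turn":
      return "model finished its turn naturally";
    default:
      return `other stop reason: ${response.stop_reason}`;
  }
}

// Hand-written samples for illustration only.
const stoppedBySequence = { stop_reason: "stop_sequence", stop_sequence: "\nUser:" };
const truncated = { stop_reason: "max_tokens", stop_sequence: null };

console.log(describeStop(stoppedBySequence));
console.log(describeStop(truncated));
```

A max_tokens result is the case to watch for when the output must be parsed, because the JSON may be cut off mid-object.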

6. Streaming Responses (Real-Time Output)

Streaming lets Claude send text incrementally.

with client.messages.stream(...) as stream:  # Python SDK
    for text in stream.text_stream:
        # end="" avoids a line break after every chunk
        print(text, end="", flush=True)

Why streaming exists

Faster perceived response

Better UX

Long outputs

When NOT to stream (or when to buffer the full response before parsing)

JSON APIs

Tool calls

Strict schema validation

Production pattern

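// JavaScript SDK: `stream` is the object returned by client.messages.stream({ ... })
let buffer = "";

for await (const event of stream) {
  // Text arrives as content_block_delta events that carry a text_delta
  if (event.type === "content_block_delta" && event.delta.type === "text_delta") {
    buffer += event.delta.text;
    process.stdout.write(event.delta.text);
  }
}

// buffer now contains the full response text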
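
One way to reconcile streaming with strict JSON parsing is to display chunks as they arrive but parse only the completed buffer. The sketch below assumes an async iterable of stream events, such as the one returned by client.messages.create({ ..., stream: true }) in the JavaScript SDK. collectText and fakeStream are hypothetical names, and fakeStream yields hand-written events so the example runs without a network call.

```javascript
// Collects streamed text deltas into one string. `events` is any async
// iterable of Messages API stream events.
async function collectText(events, onChunk = () => {}) {
  let buffer = "";
  for await (const event of events) {
    if (event.type === "content_block_delta" && event.delta.type === "text_delta") {
      buffer += event.delta.text;
      onChunk(event.delta.text); // e.g. forward the chunk to the UI
    }
  }
  return buffer;
}

// Stand-in stream for illustration only: yields hand-written events.
async function* fakeStream() {
  yield { type: "message_start" };
  yield { type: "content_block_delta", delta: { type: "text_delta", text: '{"ok":' } };
  yield { type: "content_block_delta", delta: { type: "text_delta", text: " true}" } };
  yield { type: "message_stop" };
}

// Parse only after the stream has finished; partial chunks are not valid JSON.
const parsedResult = collectText(fakeStream()).then((text) => JSON.parse(text));
parsedResult.then((parsed) => console.log(parsed.ok)); // true
```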

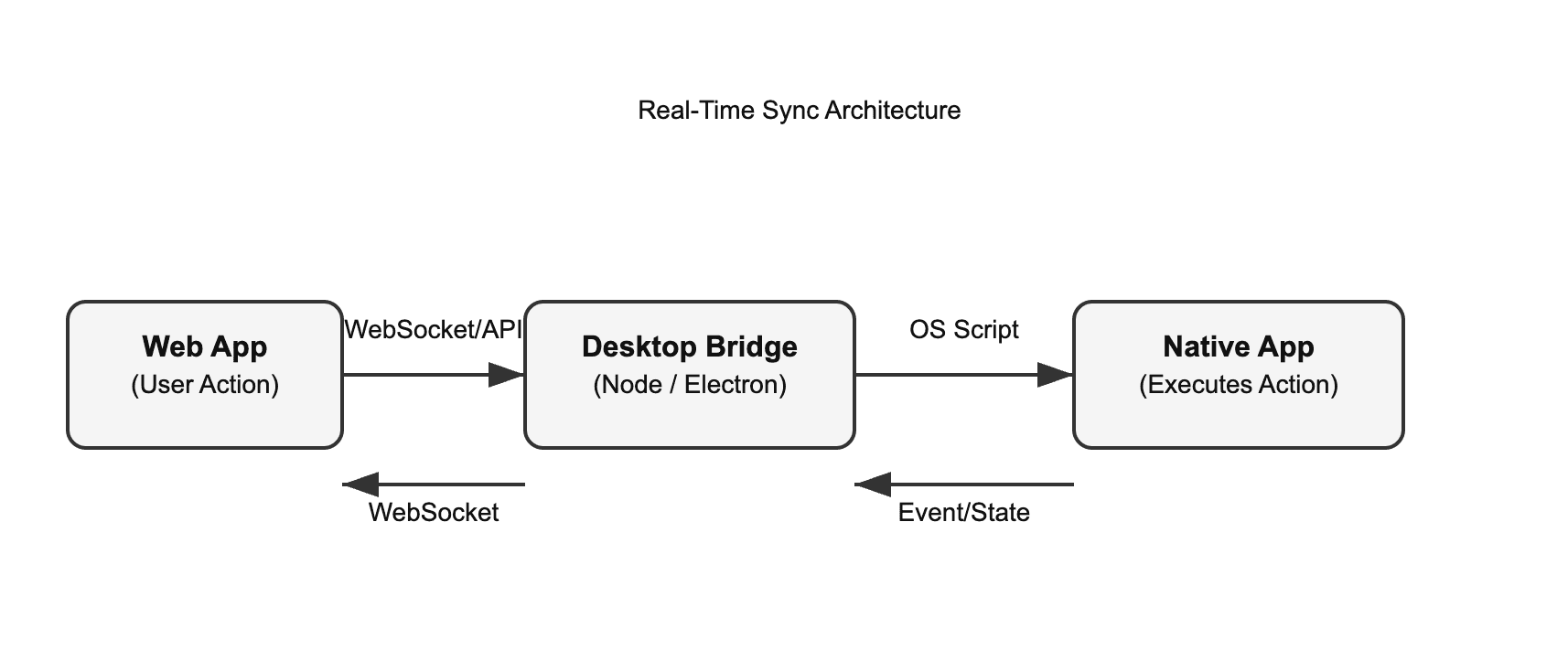

7. Multimodal Prompting (Images + Text)

Anthropic allows image + text together.

{
  role: "user",
  content: [
    { type: "image", source: {...} },
    { type: "text", text: "Count the containers." }
  ]
}

Rules

content must be an array

Image first, instruction second

Images only in the user role
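
The rules above can be sketched as a small builder that produces an image-plus-text user message from base64 data. buildImageMessage is a hypothetical helper, and the base64 string and file name in the comments are placeholders, not real image data.

```javascript
// Image media types accepted for base64 image sources.
const SUPPORTED_IMAGE_TYPES = ["image/jpeg", "image/png", "image/gif", "image/webp"];

// Hypothetical helper: builds a user message with the image block first
// and the text instruction second.
function buildImageMessage(base64Data, mediaType, instruction) {
  if (!SUPPORTED_IMAGE_TYPES.includes(mediaType)) {
    throw new Error(`Unsupported image type: ${mediaType}`);
  }
  return {
    role: "user",
    content: [
      { type: "image", source: { type: "base64", media_type: mediaType, data: base64Data } },
      { type: "text", text: instruction },
    ],
  };
}

// In Node.js the base64 string could come from a file, for example
//   fs.readFileSync("containers.png").toString("base64")
// ("containers.png" is a hypothetical file name). A short placeholder is used here.
const message = buildImageMessage("aGVsbG8=", "image/png", "Count the containers.");
console.log(message.content.map((block) => block.type)); // [ 'image', 'text' ]
```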

8. Prompt Caching and cache_control

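Prompt caching lets the API reuse an already-processed prompt prefix across requests, which reduces latency and input cost. You enable it by marking the end of the reusable prefix with cache_control.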

This is good for:

System prompts

Templates

Policies

But a poor fit for content that changes on every request or that you do not want held in a cache:

User data

Transcripts

Images

PII

9. cache_control: { type: "ephemeral" }

"cache_control": { "type": "ephemeral" }

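This marks a cache breakpoint. The prompt prefix up to and including the marked block is cached for a short time (5 minutes by default, refreshed each time the cache is read). "Ephemeral" describes the short lifetime of the cache entry; it does not mean "do not cache".

To keep content out of the cache, leave cache_control off it and place it after the last breakpoint.

When to use it

| Data | Add cache_control? |
| --- | --- |
| System prompt, templates, policies | ✅ |
| User input | ❌ |
| Call transcripts | ❌ |
| Images | ❌ |
| Customer feedback | ❌ |

Why it matters

Per-request data stays outside the cached prefix

Cache writes are billed above the normal input rate, so caching content that is never reused costs more

A clear boundary between cached and uncached content is easier to explain in a compliance review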
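
Whether a request actually wrote to or read from the cache can be checked from the usage object on the response, which reports cache_creation_input_tokens and cache_read_input_tokens. The sketch below uses a hypothetical helper, describeCacheUsage, and hand-written usage objects rather than real API output.

```javascript
// Summarizes the cache-related usage fields of a Messages API response.
function describeCacheUsage(usage) {
  const written = usage.cache_creation_input_tokens ?? 0;
  const read = usage.cache_read_input_tokens ?? 0;
  if (read > 0) return `cache hit: ${read} tokens read from cache`;
  if (written > 0) return `cache write: ${written} tokens stored for later requests`;
  return "no caching: prefix not marked or below the minimum cacheable length";
}

// Hand-written samples for illustration only.
const firstCall = { input_tokens: 40, cache_creation_input_tokens: 2100, cache_read_input_tokens: 0 };
const secondCall = { input_tokens: 40, cache_creation_input_tokens: 0, cache_read_input_tokens: 2100 };
const shortPrompt = { input_tokens: 25 };

console.log(describeCacheUsage(firstCall));   // cache write: 2100 tokens stored for later requests
console.log(describeCacheUsage(secondCall));  // cache hit: 2100 tokens read from cache
console.log(describeCacheUsage(shortPrompt));
```

In practice this would be called as describeCacheUsage(response.usage). A marked prefix that never produces a cache hit is usually too short or changes between requests.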

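10. JavaScript Example: Cached System Prompt, Uncached User Data

import Anthropic from "@anthropic-ai/sdk";

const client = new Anthropic({
  apiKey: process.env.ANTHROPIC_API_KEY
});

const response = await client.messages.create({
  model: "claude-3-5-sonnet-20240620", // replace with a model ID currently available to your account
  max_tokens: 200,
  temperature: 0.0,
  // Stable instructions come first; cache_control marks the end of the cacheable prefix.
  // Prefixes shorter than the model's minimum cacheable length are processed without caching.
  system: [
    {
      type: "text",
      text: "You are a deterministic analysis engine.",
      cache_control: { type: "ephemeral" }
    }
  ],
  messages: [
    {
      role: "user",
      // Per-request user data carries no cache_control, so it stays outside the cached prefix
      content: [
        { type: "text", text: "The service was slow and frustrating." }
      ]
    }
  ]
});

// The first content block holds the generated text
console.log(response.content[0].text);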

11. What Makes a Prompt Production-Grade

A production prompt has:

Clear role definition

Explicit constraints

Defined output format

Fail-closed behavior

Low temperature

No exposed reasoning

Safe handling of sensitive data

Good prompts are specifications, not conversations.

12. Production-Grade Sentiment Analysis Prompt

This prompt follows all best practices discussed above.

Prompt

Analyze the sentiment of the following customer message.

Rules:
- Classify sentiment as POSITIVE, NEGATIVE, or NEUTRAL
- Do not include personal data
- Do not infer intent beyond the text
- Limit text fields to 100 characters

Output:
Return ONLY valid JSON.
No explanations.

Schema:
{
  "sentiment": "POSITIVE | NEGATIVE | NEUTRAL",
  "confidence": 0.0-1.0,
  "summary": "string"
}

If sentiment cannot be determined, return:
{
  "sentiment": "NEUTRAL",
  "confidence": 0.0,
  "summary": "Insufficient data"
}
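
The prompt asks for fail-closed output, but the calling code should not rely on the model alone to honor it. Below is a minimal sketch of a validator that enforces the schema on the client side and returns the same fallback object when anything is off. parseSentiment is a hypothetical helper, and the sample strings are hand-written, not real model output.

```javascript
// Fallback object copied from the prompt's "cannot be determined" case.
const FALLBACK = { sentiment: "NEUTRAL", confidence: 0.0, summary: "Insufficient data" };
const LABELS = ["POSITIVE", "NEGATIVE", "NEUTRAL"];

// Parses the model's text and checks it against the schema. Any parse
// error or schema violation yields the fallback (fail closed).
function parseSentiment(rawText) {
  let data;
  try {
    data = JSON.parse(rawText);
  } catch {
    return { ...FALLBACK };
  }
  const valid =
    data !== null &&
    typeof data === "object" &&
    LABELS.includes(data.sentiment) &&
    typeof data.confidence === "number" &&
    data.confidence >= 0 &&
    data.confidence <= 1 &&
    typeof data.summary === "string" &&
    data.summary.length <= 100;
  if (!valid) return { ...FALLBACK };
  // Copy only the known fields so unexpected extras are dropped.
  return { sentiment: data.sentiment, confidence: data.confidence, summary: data.summary };
}

const good = parseSentiment('{"sentiment":"NEGATIVE","confidence":0.9,"summary":"Slow support"}');
const bad = parseSentiment("Sure! The sentiment is negative.");
console.log(good.sentiment); // NEGATIVE
console.log(bad.summary);    // Insufficient data
```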

13. Sentiment Analysis: Full JavaScript Example

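// Reuses the `client` created in section 10.
// The section 12 prompt goes in `system`, so the rules travel with every request.
const SENTIMENT_PROMPT = `You are a strict sentiment classification engine.
Analyze the sentiment of the customer message.
Rules:
- Classify sentiment as POSITIVE, NEGATIVE, or NEUTRAL
- Do not include personal data
- Do not infer intent beyond the text
- Limit text fields to 100 characters
Return ONLY valid JSON. No explanations.
Schema: {"sentiment": "POSITIVE | NEGATIVE | NEUTRAL", "confidence": 0.0-1.0, "summary": "string"}
If sentiment cannot be determined, return:
{"sentiment": "NEUTRAL", "confidence": 0.0, "summary": "Insufficient data"}`;

const response = await client.messages.create({
  model: "claude-3-5-sonnet-20240620", // replace with a model ID currently available to your account
  max_tokens: 150,
  temperature: 0.0,
  system: SENTIMENT_PROMPT,
  messages: [
    {
      role: "user",
      // Customer text is per-request data, so it carries no cache_control
      content: [{ type: "text", text: "Support was slow and unhelpful." }]
    }
  ]
});

// The prompt asks for JSON only, so this text should be a single JSON object
console.log(response.content[0].text);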

14. Final Takeaway

Anthropic prompting works best when you treat prompts like contracts: explicit, constrained, deterministic, and safe.